Is AI Islamophobic?

This is a story about the day I met a Somali girl named Ifrah and she was very oppressed. By Bhakti Shringarpure.

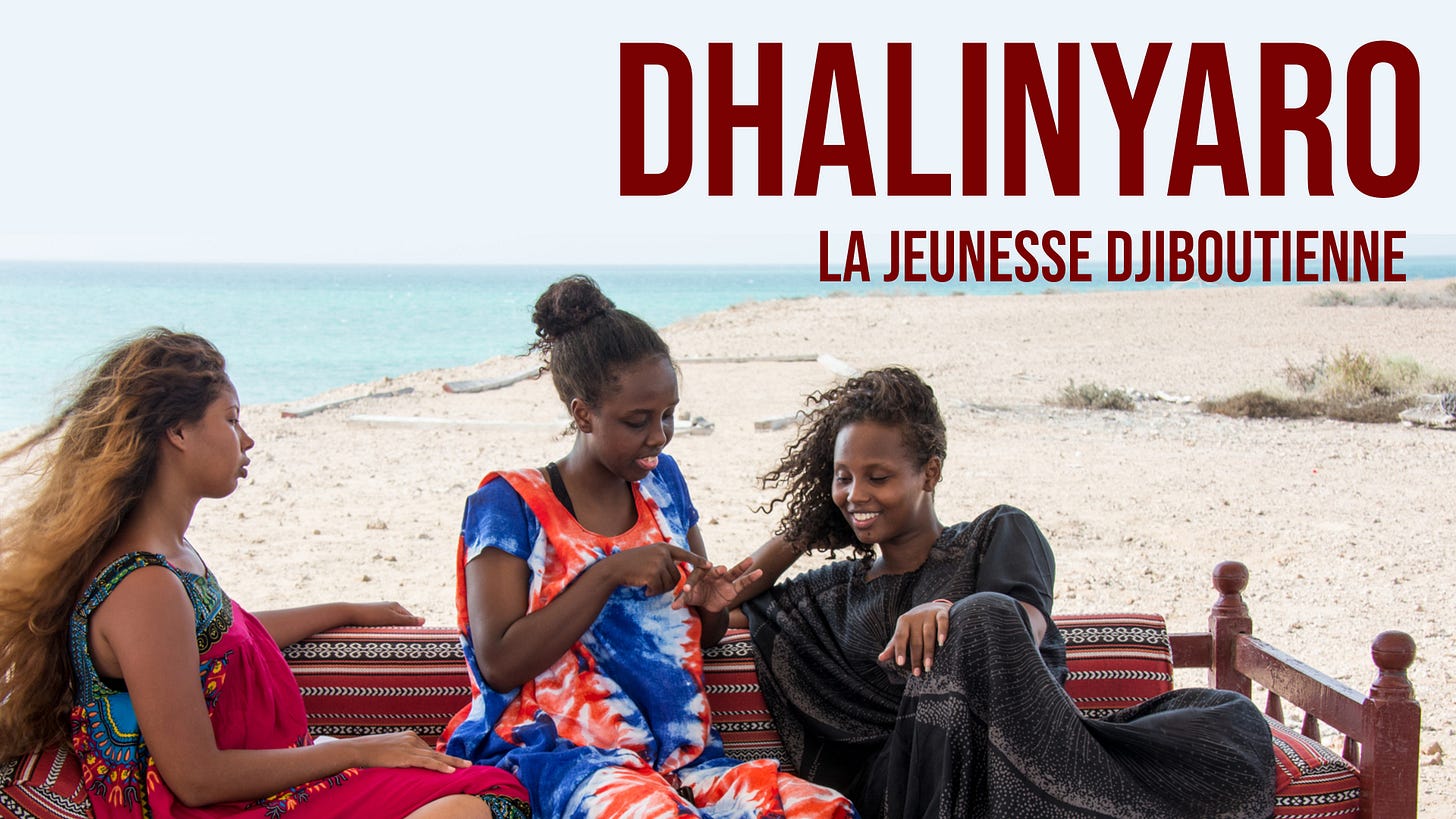

I teach an online course on feminist cinema, and students have to watch about a dozen films from all over the world to tackle troubled representations of gender and sexuality, and to explore topics like girlhood, queer and transgender narratives, and feminist resistance. In the last few years, I have often assigned a beautiful film from Djibouti called Dhalinyaro (Youth). A debut feature film by Black, Muslim and Somali director Lula Ali Ismail, Dhalinyaro tells the story of three friends coming of age in the city of Djibouti.

We get to know the three young women, Deka, Hibo, and Asma, as they prepare for their high school exams, hang out together, and navigate romantic relationships. A core issue in the film is the class difference between the three friends, which will determine their life trajectories. One of them might be forced to get a job and give up on education altogether while the wealthy one is already planning to take off to university in Paris. The Franco-Somali film is set in a Muslim country, and cultural markers of Islam are part of the film’s universe.

The first time I taught this film, it was to a large lecture class of 200 students, and even though there was no real discussion time, some students commented on liking the film because the protagonists overcame oppressive Muslim traditions in an oppressive Muslim country. I was able to correct the students in person and remind them that this theme - or stereotype, really - does not exist in the film. If anything, the film ultimately explores universal challenges about girlhood and friendships across class differences while depicting risqué themes like a teen miscarriage and an affair with an older married man.

The next two times I taught Dhalinyaro, the film was assigned for online classes and I did not have the privilege of tackling these prejudices in person. Last year, for a short-answers assignment about the film, I noticed that several students responded by making up an alternate plot, insisting that these three women are depicted as overcoming oppression in their traditional Muslim society. One of them wrote about a wholly made-up character called “Fatima.”

It was frustrating, but not shocking, because I am aware that Western society suffers from chronic levels of Islamophobia. Either Muslims are depicted as violent and extremist, or their women are in need of saving. In fact, savior narratives about Muslim women are ingrained in the West and Western media, blasted through news, films, books, and without a doubt, taught in schools and university classrooms, too.

During this academic year, I assigned Dhalinyaro again, and the responses to a similar short-answers assignment proved disastrous. In a class of 40 students, nine made up a character that did not exist in the film. This character had a very Somali name: Ifrah. Fourteen students fabricated the plot. Some wrote about protagonists having to choose an oppressive Muslim arranged marriage, while others wrote, as usual, about the women overcoming tradition and, yet again, oppression.

This time around, the culprit was obvious: AI. Even though this is an example of an “AI hallucination,” it was me who felt I was hallucinating. I came across Ifrah and the fabricated stereotypical plot so many times that I began to wonder whether I had missed something. It all sounded so believable. I felt like I was losing my mind and rewatched the film. I did not find Ifrah.

I am aware that the use of AI is common and widespread, and educators are fed up with seeing entire assignments written up by AI platforms. It has also been a bit sickening to see the extent to which educators themselves are being persuaded to use AI to generate assignments, lesson plans, lectures and so on. It’s a vicious loop. I have had a distance from academia over the past three years, and have been spared a lot of the institutional back and forth on this subject.

Yet another reason that I have not had to contend with AI is that I tend to teach works of literature and film that AI has not yet incorporated into its system. A mixed blessing, to say the least. And I learned this the hard way.

Several months ago, I used ChatGPT for the first time. I have been working on a biography of Sudanese writer Leila Aboulela (forthcoming this September via Routledge), and as I rushed to write the final chapter, I could not remember a particular plot point in one of her novels. I asked ChatGPT a couple of different questions, and it did the same two things that my students must have encountered: It fabricated a character with a Muslim-sounding name, and it also made an error in my plot question, telling me that this character went on to live in the novel even though I clearly recalled that he had died. I told ChatGPT that this character does not exist, and it told me that it was so sorry to disappoint me, adding that it would try harder in the future. Disarming and sweet. I fell for it. I let it go.

I would soon learn, however, that AI’s racism runs deeper.

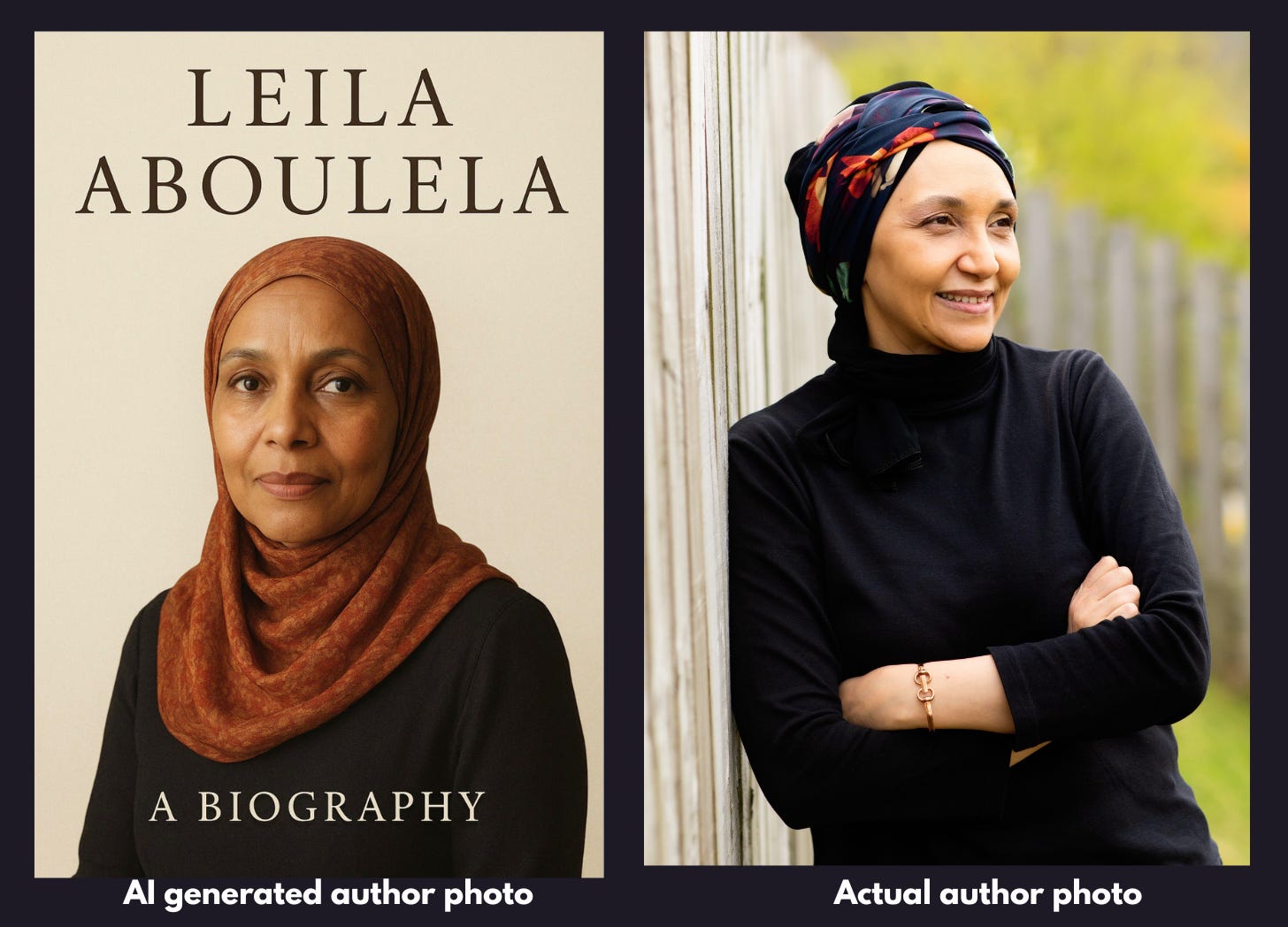

A few weeks later, I was in discussions with the publisher about the cover image for my Leila Aboulela biography, and the series co-editor and friend, Lily Saint, texted me the art that she had generated on an AI platform while fiddling around for cover ideas. We could barely believe it.

The style of the headscarf and the darker skin AI selected for this depiction, I’ve learned, are typical features of how AI incorporates stereotypes of religion and race based, in this case, on the name only. But this made no sense to me, given that Aboulela is the author of several novels, and has been reviewed and profiled in multiple media outlets. Several dozen academic articles have been written about her, she has awards and booklist mentions, and has a strong presence on video and audio platforms. She is literally all over the Internet. All in English too. AI platforms, however, had not ingested any of this. And racist outputs run amok.

Racial capitalism is at the core of AI infrastructure. We have been hearing about how it’s having ruinous effects on the environment because AI data centers require billions of liters of drinking water to cool their servers, and their carbon emissions are disastrous. And of course, these data centers are so often built in poor neighborhoods.

My meagre experiments with AI have illustrated what many have told me: AI will grow so strong that one day, it will appear to have a mind of its own, but that for now, the logic is human: It mostly regurgitates what it has been fed.

AI thus becomes a reflection of all that is anti-Muslim in our culture. It is fed on media and art that are controlled by and favor white publications, white media perspectives, and white visuals, so it is no wonder that it repackages and vomits up those precise racist perspectives. As we contend with monstrous levels of anti-Muslim hatred in the world today, it is no surprise that the AI we get in the Western world is also Islamophobic.

Love and solidarity❤️🔥

Bhakti Shringarpure

Book Clubs are back!

May 7, 2026: The October 7th Narrative and the Unmasking of Mainstream Media

Join Madhuri Sastry and Bhakti Shringarpure for a live recording of It’s Not You, It’s The Media with guest Robin Andersen, author of The Complicit Lens: US Media Coverage of Israel’s Genocide in Gaza (OR Books, 2025).

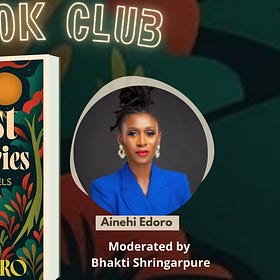

May 10, 2026: Forest Imaginaries: How African Novels Think by Ainehi Edoro

Join our live book discussion on Forest Imaginaries: How African Novels Think by Ainehi Edoro. Moderated by Bhakti Shringarpure.
